Setup AWS Batch using CloudFormation#

This describes the deployment code that sets up the necessary AWS resources to utilize the AWS Batch runner. Using Batch is advantageous because it starts and stops instances automatically and runs jobs sequentially or in parallel according to the dependencies between them. In addition, this deployment sets up distinct CPU and GPU resources and utilizes spot instances, which is more cost-effective than always using a GPU on-demand instance. Deployment is driven via the AWS console using a CloudFormation template.

This AWS Batch setup is an “advanced” option that assumes some familiarity with Docker, AWS IAM, named profiles, availability zones, EC2, ECR, CloudFormation, and Batch.

AWS Account Setup#

In order to setup Batch using this repo, you will need to setup your AWS account so that:

you have either root access to your AWS account, or an IAM user with admin permissions. It is probably possible with less permissions, but we haven’t figured out how to do this yet after some experimentation.

you have the ability to launch P2 or P3 instances which have GPUs.

you have requested permission from AWS to use availability zones outside the USA if you would like to use them. (New AWS accounts can’t launch EC2 instances in other AZs by default.) If you are in doubt, just use

us-east-1.

AWS Credentials#

Using the AWS CLI, create an AWS profile for the target AWS environment. An example, naming the profile raster-vision:

$ aws --profile raster-vision configure

AWS Access Key ID [****************F2DQ]:

AWS Secret Access Key [****************TLJ/]:

Default region name [us-east-1]: us-east-1

Default output format [None]:

You will be prompted to enter your AWS credentials, along with a default region. The Access Key ID and Secret Access Key can be retrieved from the IAM console. These credentials will be used to authenticate calls to the AWS API when using Packer and the AWS CLI.

Deploying Batch resources#

To deploy AWS Batch resources using AWS CloudFormation, start by logging into your AWS console. Then, follow the steps below:

Navigate to

CloudFormation > Create StackIn the

Choose a template field, selectUpload a template to Amazon S3and upload the template in cloudformation/template.yml.Prefix: If you are setting up multiple RV stacks within an AWS account, you need to set a prefix for namespacing resources. Otherwise, there will be name collisions with any resources that were created as part of another stack.Specify the following required parameters:

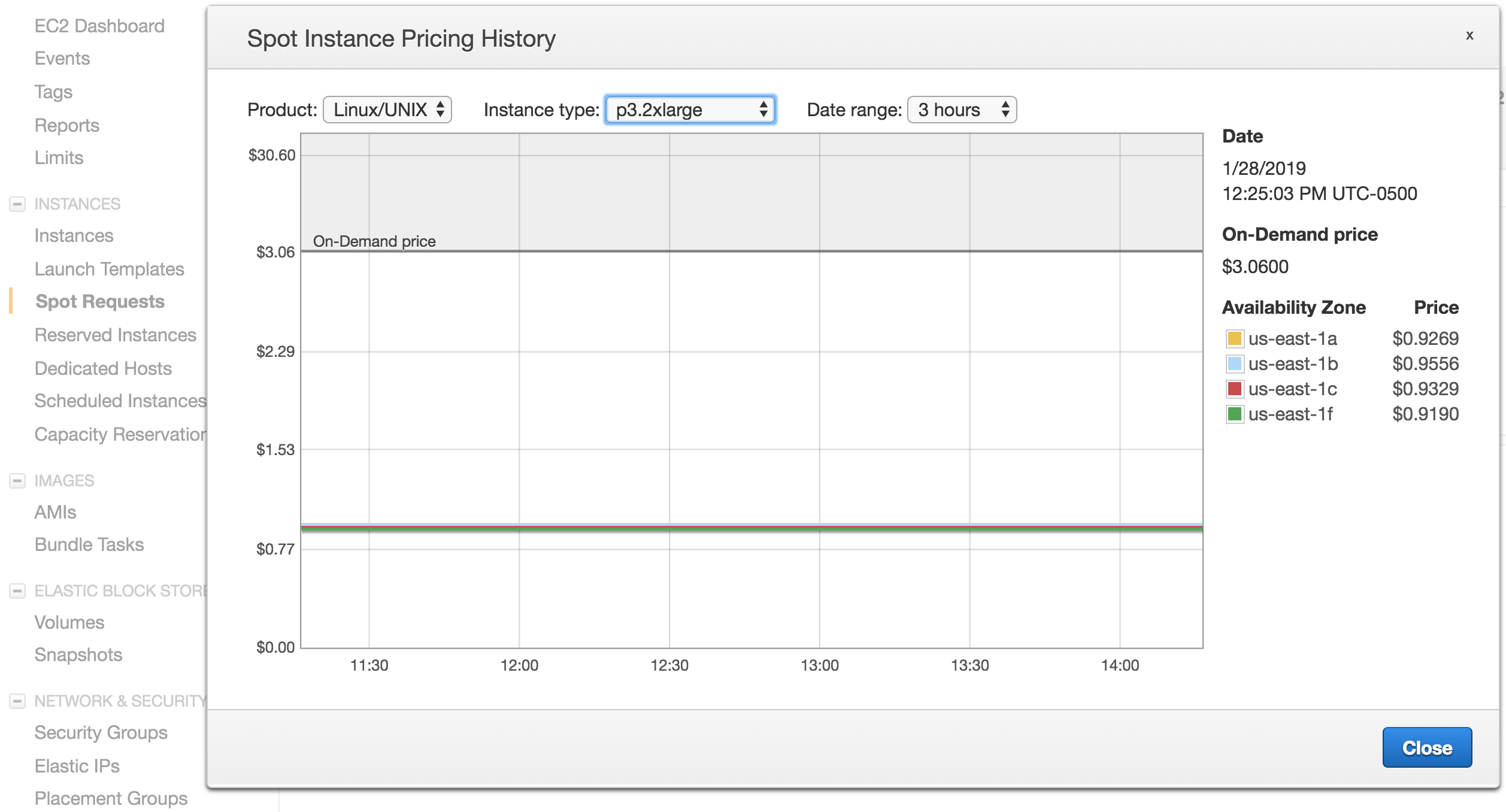

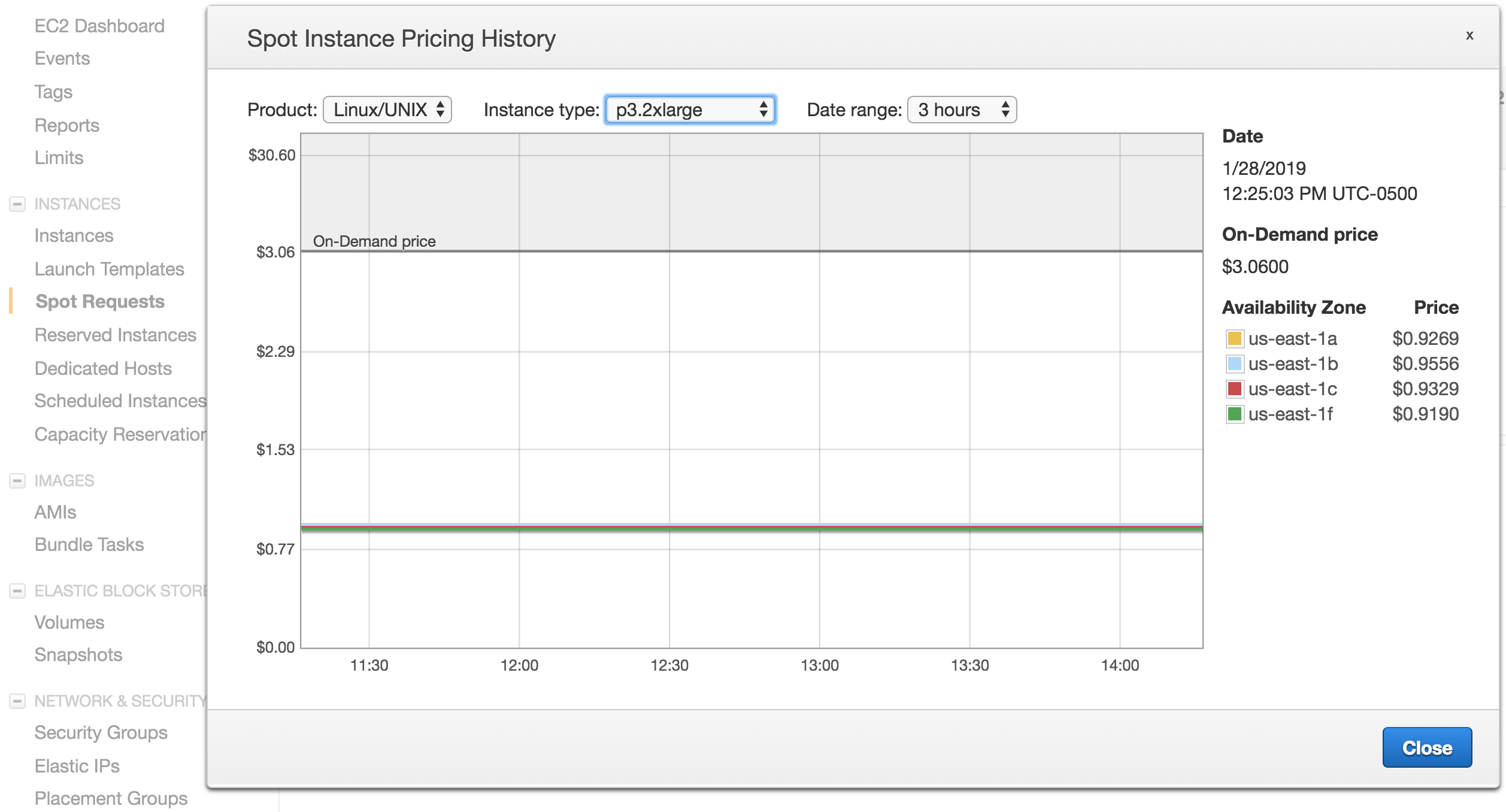

Stack Name: The name of your CloudFormation stackVPC: The ID of the Virtual Private Cloud in which to deploy your resource. Your account should have at least one by default.Subnets: The ID of any subnets that you want to deploy your resources into. Your account should have at least two by default; make sure that the subnets you select are in the VPC that you chose by using the AWS VPC console, or else CloudFormation will throw an error. (Subnets are tied to availability zones, and so affect spot prices.) In addition, you need to choose subnets that are available for the instance type you have chosen. To find which subnets are available, go to Spot Pricing History in the EC2 console and select the instance type. Then look up the availability zones that are present in the VPC console to find the corresponding subnets. Your spot requests will be more likely to be successful and your savings will be greater if you have subnets in more availability zones.

SSH Key Name: The name of the SSH key pair you want to be able to use to shell into your Batch instances. If you’ve created an EC2 instance before, you should already have one you can use; otherwise, you can create one in the EC2 console. Note: If you decide to create a new one, you will need to log out and then back in to the console before creating a Cloudformation stack using this key.Instance Types: Provide the instance types you would like to use. (For GPUs,p3.2xlargeis approximately 4 times the speed for 4 times the price.)

Adjust any preset parameters that you want to change (the defaults should be fine for most users) and click

Next.Note

Advanced users: If you plan on modifying Raster Vision and would like to publish a custom image to run on Batch, you will need to specify an ECR repo name and a tag name. Note that the repo names cannot be the same as the Stack name (the first field in the UI) and cannot be the same as any existing ECR repo names. If you are in a team environment where you are sharing the AWS account, the repo names should contain an identifier such as your username.

Accept all default options on the

Optionspage and clickNext.Accept “I acknowledge that AWS CloudFormation might create IAM resources with custom names” on the

Reviewpage and clickCreate.Watch your resources get deployed!

Publish local Raster Vision images to ECR#

If you setup ECR repositories during the CloudFormation setup (the “advanced user” option), then you will need to follow this step, which publishes local Raster Vision images to those ECR repositories. Every time you make a change to your local Raster Vision images and want to use those on Batch, you will need to run these steps:

Run

./docker/buildin the Raster Vision repo to build a local copy of the Docker image.Run

./docker/ecr_publishin the Raster Vision repo to publish the Docker images to ECR. Note that this requires setting theRV_ECR_IMAGEenvironment variable to be set to<ecr_repo_name>:<tag_name>.

Update Raster Vision configuration#

Finally, make sure to update your Running on AWS Batch with the Batch resources that were created.

Deploy new job definitions#

When a user starts working on a new RV-based project (or a new user starts working on an existing RV-based project), they will often want to publish a custom Docker image to ECR and use it when running on Batch. To facilitate this, there is a separate cloudformation/job_def_template.yml. The idea is that for each user/project pair which is identified by a Namespace string, a CPU and GPU job definition is created which point to a specified ECR repo using that Namespace as the tag. After creating these new resources, the image should be published to <repo>:<namespace> on ECR, and the new job definitions should be placed in a project-specific RV profile file.