Note

This page was generated from pred_and_eval_ss.ipynb.

Note

If running outside of the Docker image, you may need to set some environment variables manually. You can do it like so:

import os

from subprocess import check_output

os.environ['GDAL_DATA'] = check_output('pip show rasterio | grep Location | awk \'{print $NF"/rasterio/gdal_data/"}\'', shell=True).decode().strip()

We will be accessing files on S3 in this notebook. Since those files are public, we set the AWS_NO_SIGN_REQUEST environment variable to tell rasterio to skip request signing, so no AWS credentials are needed.

[ ]:

%env AWS_NO_SIGN_REQUEST=YES
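The %env magic is only available inside IPython or Jupyter. When running these steps as a plain Python script, a minimal equivalent is to set the variable through os.environ before any S3 file is opened:

```python
import os

# Equivalent of `%env AWS_NO_SIGN_REQUEST=YES` for a plain Python script.
# Set it before rasterio/GDAL opens any S3 file.
os.environ['AWS_NO_SIGN_REQUEST'] = 'YES'
```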

Prediction and Evaluation#

Load a Learner with a trained model from bundle – Learner.from_model_bundle()#

[1]:

bundle_uri = 's3://azavea-research-public-data/raster-vision/examples/model-zoo-0.30/spacenet-vegas-buildings-ss/train/model-bundle.zip'

[2]:

from rastervision.pytorch_learner import SemanticSegmentationLearner

learner = SemanticSegmentationLearner.from_model_bundle(bundle_uri, training=False)

2024-04-10 14:29:44:rastervision.pytorch_learner.learner: INFO - Loading learner from bundle s3://azavea-research-public-data/raster-vision/examples/model-zoo-0.30/spacenet-vegas-buildings-ss/train/model-bundle.zip.

2024-04-10 14:29:44:rastervision.pipeline.file_system.utils: INFO - Downloading s3://azavea-research-public-data/raster-vision/examples/model-zoo-0.30/spacenet-vegas-buildings-ss/train/model-bundle.zip to /opt/data/tmp/cache/s3/azavea-research-public-data/raster-vision/examples/model-zoo-0.30/spacenet-vegas-buildings-ss/train/model-bundle.zip...

2024-04-10 14:29:53:rastervision.pytorch_learner.learner: INFO - Unzipping model-bundle to /opt/data/tmp/tmpohx2fqli/model-bundle

Downloading: "https://download.pytorch.org/models/resnet50-11ad3fa6.pth" to /root/.cache/torch/hub/checkpoints/resnet50-11ad3fa6.pth

100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 97.8M/97.8M [00:02<00:00, 42.7MB/s]

2024-04-10 14:29:58:rastervision.pytorch_learner.learner: INFO - Loading model weights from: /opt/data/tmp/tmpohx2fqli/model-bundle/model.pth

Note

If you used a custom model instead of using ModelConfig while training, you will need to initialize that model again and pass it to Learner.from_model_bundle(). See the Training a model tutorial for an example.

Get scene to predict#

[3]:

scene_id = 5631

image_uri = f's3://spacenet-dataset/spacenet/SN2_buildings/train/AOI_2_Vegas/PS-RGB/SN2_buildings_train_AOI_2_Vegas_PS-RGB_img{scene_id}.tif'

label_uri = f's3://spacenet-dataset/spacenet/SN2_buildings/train/AOI_2_Vegas/geojson_buildings/SN2_buildings_train_AOI_2_Vegas_geojson_buildings_img{scene_id}.geojson'

[4]:

from rastervision.core.data import ClassConfig

class_config = ClassConfig(
    names=['building', 'background'],
    colors=['orange', 'black'])
class_config.ensure_null_class()

Load stats that will be used to normalize the images before they are fed into the model:

[14]:

from rastervision.core.data import StatsTransformer

stats_uri = 's3://azavea-research-public-data/raster-vision/examples/model-zoo-0.30/spacenet-vegas-buildings-ss/analyze/stats/train_scenes/stats.json'

stats_tf = StatsTransformer.from_stats_json(stats_uri)

stats_tf

[14]:

StatsTransformer(means=array([424.77525978, 593.13316996, 447.48274874]), stds=array([221.28096132, 244.1374012 , 149.42471905]), max_stds=3.0)
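To make the role of these statistics concrete, here is a rough NumPy sketch of this kind of normalization. It assumes that the transformer standardizes each channel, clips the result to ±max_stds standard deviations, and rescales it to the uint8 range. The helper name normalize_chip is hypothetical, and the actual StatsTransformer implementation may differ in detail.

```python
import numpy as np

# Channel statistics copied from the StatsTransformer repr above.
means = np.array([424.77525978, 593.13316996, 447.48274874])
stds = np.array([221.28096132, 244.1374012, 149.42471905])
max_stds = 3.0


def normalize_chip(chip: np.ndarray) -> np.ndarray:
    """Hypothetical helper: map an (H, W, C) chip to uint8 via z-scores."""
    z = (chip - means) / stds                 # per-channel z-scores
    z = np.clip(z, -max_stds, max_stds)       # limit the influence of outliers
    scaled = (z + max_stds) / (2 * max_stds)  # map [-max_stds, max_stds] to [0, 1]
    return (scaled * 255).astype(np.uint8)


# A 1x3 "image": one pixel at the mean, one far above it, one far below it.
chip = np.stack([means, means + 10 * stds, means - 10 * stds])[np.newaxis]
out = normalize_chip(chip)
print(out[0])
```

Under these assumptions, a pixel at the channel mean lands in the middle of the uint8 range, while extreme values saturate at 0 or 255 instead of stretching the scale.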

[38]:

from rastervision.pytorch_learner import SemanticSegmentationSlidingWindowGeoDataset

ds = SemanticSegmentationSlidingWindowGeoDataset.from_uris(
    class_config=class_config,
    image_uri=image_uri,
    image_raster_source_kw=dict(raster_transformers=[stats_tf]),
    size=325,
    stride=325,
    out_size=325,
)

2024-04-10 15:30:41:rastervision.pipeline.file_system.utils: INFO - Using cached file /opt/data/tmp/cache/s3/spacenet-dataset/spacenet/SN2_buildings/train/AOI_2_Vegas/PS-RGB/SN2_buildings_train_AOI_2_Vegas_PS-RGB_img5631.tif.
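The size and stride arguments control how the scene is cut into chips. As a rough illustration, the sketch below enumerates the windows that a 325-pixel window with a 325-pixel stride produces. It is not the library's implementation, which may also handle padding and partial windows at the edges. The helper sliding_windows is hypothetical, and the 650x650 scene size is an assumption (it is consistent with the pixel counts reported in the evaluation later on this page).

```python
def sliding_windows(height, width, size, stride):
    """Hypothetical helper: (ymin, xmin, ymax, xmax) of each full window."""
    return [(y, x, y + size, x + size)
            for y in range(0, height - size + 1, stride)
            for x in range(0, width - size + 1, stride)]


# Assumed 650x650 scene, cut into non-overlapping 325x325 windows.
windows = sliding_windows(650, 650, size=325, stride=325)
for window in windows:
    print(window)
```

Because the stride equals the window size, the windows tile the scene without overlap. A smaller stride would produce overlapping windows.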

Predict – Learner.predict_dataset()#

Make predictions via Learner.predict_dataset() and then turn them into Labels via Labels.from_predictions() (specifically, SemanticSegmentationLabels.from_predictions()).

[39]:

from rastervision.core.data import SemanticSegmentationLabels

predictions = learner.predict_dataset(
    ds,
    raw_out=True,
    numpy_out=True,
    predict_kw=dict(out_shape=(325, 325)),
    progress_bar=True)

pred_labels = SemanticSegmentationLabels.from_predictions(
    ds.windows,
    predictions,
    smooth=True,
    extent=ds.scene.extent,
    num_classes=len(class_config))

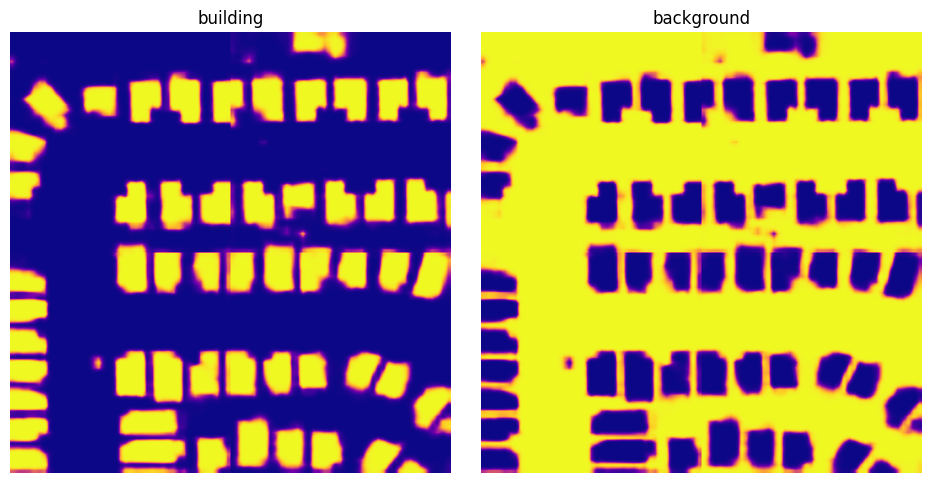
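With smooth=True, the per-window score arrays are combined into a single scene-level raster. One common way to do this, sketched below with NumPy, is to accumulate the scores and a per-pixel hit count and then average wherever windows overlap. This is an assumption about the general technique rather than a description of the library's internals, and all array names and toy values are made up for illustration.

```python
import numpy as np

num_classes, height, width = 2, 4, 6
score_sum = np.zeros((num_classes, height, width))
hits = np.zeros((height, width))

# Two hypothetical 4x4 windows (ymin, xmin, ymax, xmax) overlapping in columns 2-3.
windows = [(0, 0, 4, 4), (0, 2, 4, 6)]
# Fake per-window class probabilities with shape (num_classes, 4, 4).
window_scores = [
    np.stack([np.full((4, 4), 0.8), np.full((4, 4), 0.2)]),
    np.stack([np.full((4, 4), 0.4), np.full((4, 4), 0.6)]),
]

for (ymin, xmin, ymax, xmax), scores in zip(windows, window_scores):
    score_sum[:, ymin:ymax, xmin:xmax] += scores
    hits[ymin:ymax, xmin:xmax] += 1

# Average the accumulated scores wherever at least one window contributed.
scene_scores = score_sum / np.maximum(hits, 1)
```

In this notebook the stride equals the window size, so no pixels are covered twice and the averaging step leaves the scores unchanged.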

Visualize predictions#

pred_labels is an instance of SemanticSegmentationSmoothLabels, which stores a probability distribution over the classes for every pixel in the scene. We can retrieve these probabilities as an array via SemanticSegmentationSmoothLabels.get_score_arr().

Note

There is also a SemanticSegmentationSmoothLabels.get_label_arr() method that will return a 2D raster of class IDs representing the most probable class for each pixel.
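Conceptually, going from a score array to a label array is an argmax over the class axis. The toy NumPy example below shows the idea on a made-up 2x2 score array with two classes. It illustrates the relationship between the two arrays and is not the library's own code.

```python
import numpy as np

# Toy score array with shape (num_classes, height, width).
scores = np.array([
    [[0.9, 0.3], [0.6, 0.1]],   # class 0, e.g. building
    [[0.1, 0.7], [0.4, 0.9]],   # class 1, e.g. background
])

# The most probable class per pixel is the argmax over the class axis.
label_arr = scores.argmax(axis=0)
print(label_arr)
```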

[40]:

scores = pred_labels.get_score_arr(pred_labels.extent)

[41]:

from matplotlib import pyplot as plt

scores_building = scores[0]

scores_background = scores[1]

fig, (ax1, ax2) = plt.subplots(1, 2, figsize=(10, 5))

fig.tight_layout(w_pad=-2)

ax1.imshow(scores_building, cmap='plasma')

ax1.axis('off')

ax1.set_title('building')

ax2.imshow(scores_background, cmap='plasma')

ax2.axis('off')

ax2.set_title('background')

plt.show()

Save predictions to file – SemanticSegmentationSmoothLabels.save()#

[21]:

pred_labels.save(
    uri=f'./spacenet-vegas-buildings-ss/predict/{scene_id}',
    crs_transformer=ds.scene.raster_source.crs_transformer,
    class_config=class_config)

Evaluate predictions#

We now want to evaluate the predictions against the ground truth labels.

Raster Vision allows us to do this via an Evaluator. In our case, this would be the SemanticSegmentationEvaluator. We are going to use its SemanticSegmentationEvaluator.evaluate_predictions() method, which takes both ground truth labels and predictions as Labels objects.

We already have the predictions as a SemanticSegmentationLabels object, so we just need to load the ground truth labels as SemanticSegmentationLabels too. We do that by using the make_ss_scene() factory function to create a scene and then calling scene.label_source.get_labels(). Alternatively, we could have created a SemanticSegmentationLabelSource directly.

[22]:

from rastervision.core.data.utils import make_ss_scene

scene = make_ss_scene(
    class_config=class_config,
    image_uri=image_uri,
    label_vector_uri=label_uri,
    label_vector_default_class_id=class_config.get_class_id('building'),
    label_raster_source_kw=dict(
        background_class_id=class_config.get_class_id('background')),
    image_raster_source_kw=dict(allow_streaming=True))

gt_labels = scene.label_source.get_labels()

Warning 1: HTTP response code on https://spacenet-dataset.s3.amazonaws.com/spacenet/SN2_buildings/train/AOI_2_Vegas/PS-RGB/SN2_buildings_train_AOI_2_Vegas_PS-RGB_img5631.tif.msk: 403

Warning 1: HTTP response code on https://spacenet-dataset.s3.amazonaws.com/spacenet/SN2_buildings/train/AOI_2_Vegas/PS-RGB/SN2_buildings_train_AOI_2_Vegas_PS-RGB_img5631.tif.MSK: 403

2024-04-10 14:58:09:rastervision.pipeline.file_system.utils: INFO - Using cached file /opt/data/tmp/cache/s3/spacenet-dataset/spacenet/SN2_buildings/train/AOI_2_Vegas/geojson_buildings/SN2_buildings_train_AOI_2_Vegas_geojson_buildings_img5631.geojson.

Note

gt_labels is an instance of SemanticSegmentationDiscreteLabels. You can convert it to a label raster (for visualization or some other analysis) via SemanticSegmentationDiscreteLabels.get_label_arr().

[23]:

from rastervision.core.evaluation import SemanticSegmentationEvaluator

evaluator = SemanticSegmentationEvaluator(class_config)

evaluation = evaluator.evaluate_predictions(
    ground_truth=gt_labels, predictions=pred_labels)

SemanticSegmentationEvaluator.evaluate_predictions() returns a SemanticSegmentationEvaluation object which contains evaluations for each class as ClassEvaluationItem objects.

We can examine these evaluations as shown below.

Evaluation for the building class:

[24]:

evaluation.class_to_eval_item[0]

[24]:

{'class_id': 0,
 'class_name': 'building',
 'conf_mat': [[289148.0, 12106.0], [9922.0, 111324.0]],
 'conf_mat_dict': {'FN': 9922.0, 'FP': 12106.0, 'TN': 289148.0, 'TP': 111324.0},
 'conf_mat_frac': [[0.6843739644970415, 0.028653254437869823],
                   [0.023484023668639054, 0.2634887573964497]],
 'conf_mat_frac_dict': {'FN': 0.023484023668639054,
                        'FP': 0.028653254437869823,
                        'TN': 0.6843739644970415,
                        'TP': 0.2634887573964497},
 'count_error': 2184.0,
 'gt_count': 121246.0,
 'metrics': {'f1': 0.9099707368111298,
             'precision': 0.9019201166653164,
             'recall': 0.9181663724988866,
             'sensitivity': 0.9181663724988866,
             'specificity': 0.9598146414653416},
 'pred_count': 123430.0,
 'relative_frequency': 0.2869727810650888}
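The reported metrics follow from the confusion-matrix counts via the standard definitions. The short sketch below recomputes them from the TP, FP, FN, and TN values in the building evaluation above. Whether the evaluator computes them in exactly this way internally is an assumption, but the results agree with the reported values.

```python
# Confusion-matrix counts copied from the building evaluation above.
tp, fp, fn, tn = 111324.0, 12106.0, 9922.0, 289148.0

precision = tp / (tp + fp)
recall = tp / (tp + fn)          # also reported as sensitivity
specificity = tn / (tn + fp)
f1 = 2 * precision * recall / (precision + recall)

gt_count = tp + fn               # ground-truth building pixels
pred_count = tp + fp             # predicted building pixels
count_error = abs(pred_count - gt_count)
relative_frequency = gt_count / (tp + fp + fn + tn)

print(f'precision={precision:.4f} recall={recall:.4f} '
      f'specificity={specificity:.4f} f1={f1:.4f}')
```

Note that the four counts sum to 422,500 pixels, which equals 650 x 650, so every pixel of the scene is accounted for.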

Evaluation for the background class:

[25]:

evaluation.class_to_eval_item[1]

[25]:

{'class_id': 1,
 'class_name': 'background',
 'conf_mat': [[111324.0, 9922.0], [12106.0, 289148.0]],
 'conf_mat_dict': {'FN': 12106.0, 'FP': 9922.0, 'TN': 111324.0, 'TP': 289148.0},
 'conf_mat_frac': [[0.2634887573964497, 0.023484023668639054],
                   [0.028653254437869823, 0.6843739644970415]],
 'conf_mat_frac_dict': {'FN': 0.028653254437869823,
                        'FP': 0.023484023668639054,
                        'TN': 0.2634887573964497,
                        'TP': 0.6843739644970415},
 'count_error': 2184.0,
 'gt_count': 301254.0,
 'metrics': {'f1': 0.9633064811668367,
             'precision': 0.9668238205102484,
             'recall': 0.9598146414653416,
             'sensitivity': 0.9598146414653416,
             'specificity': 0.9181663724988866},
 'pred_count': 299070.0,
 'relative_frequency': 0.7130272189349113}

Save evaluation#

We can also save the evaluation as a JSON file via SemanticSegmentationEvaluation.save().

[26]:

evaluation.save(f'eval-{scene_id}.json')